XML documents

Introduction

Funnelback can index XML documents and there are some additional configuration files that are applicable to indexing XML files.

- You can map metadata classes to elements in the XML structure.

- You can display cached copies of the document via XSLT processing.

Funnelback can be configured to index XML content, creating an index with searchable, fielded data.

Funnelback metadata classes are used for the storage of XML data – with configuration that maps XML element paths to internal Funnelback metadata classes – the same metadata classes that are used for the storage of HTML page metadata. An element path is a simple XML X-Path.

XML files can be optionally split into records based on an X-Path. This is useful as XML files often contain a number of records that should be treated as individual result items.

Each record is then indexed with the XML fields mapped to internal Funnelback metadata classes as defined in the XML mappings configuration file.

XML configuration

The collection's XML configuration defines how Funnelback’s XML parser will process any XML files that are found when indexing.

The XML configuration is made up of two parts:

- XML special configuration

- Metadata classes containing XML field mappings

The XML parser is used for the parsing of XML documents and also for indexing of most non-web data. The XML parser is used for:

- XML, CSV and JSON files,

- Database, social media, directory, HP CM/RM/TRIM and most custom collections.

XML element paths

Funnelback element paths are simple X-Paths that select on fields and attributes.

Absolute and unanchored X-Paths are supported, however for some special XML fields absolute paths are required.

- If the path begins with

/then the path is absolute (it matches from the top of the XML structure). - If the path begins with

//it is unanchored (it can be located anywhere in the XML structure).

XML attributes can be used by adding @attribute to the end of the path.

Element paths are case sensitive.

Attribute values are not supported in element path definitions.

Example element paths:

| X-Path | Valid Funnelback element path |

|---|---|

| /items/item | VALID |

| //item/keywords/keyword | VALID |

| //keyword | VALID |

| //image@url | VALID |

| /items/item[@type=value] | NOT VALID |

Interpretation of field content

- CDATA tags can be used with fields that contain reserved characters, or the characters should be HTML encoded.

- Fields containing multiple values should be delimited with a vertical bar character, or the field repeated with a single value in each repeated field.

e.g. The indexed value of //keywords/keyword and //subject below would be identical.

<keywords>

<keyword>keyword 1</keyword>

<keyword>keyword 2</keyword>

<keyword>keyword 3</keyword>

</keywords>

<subject>keyword 1|keyword 2|keyword 3</subject>

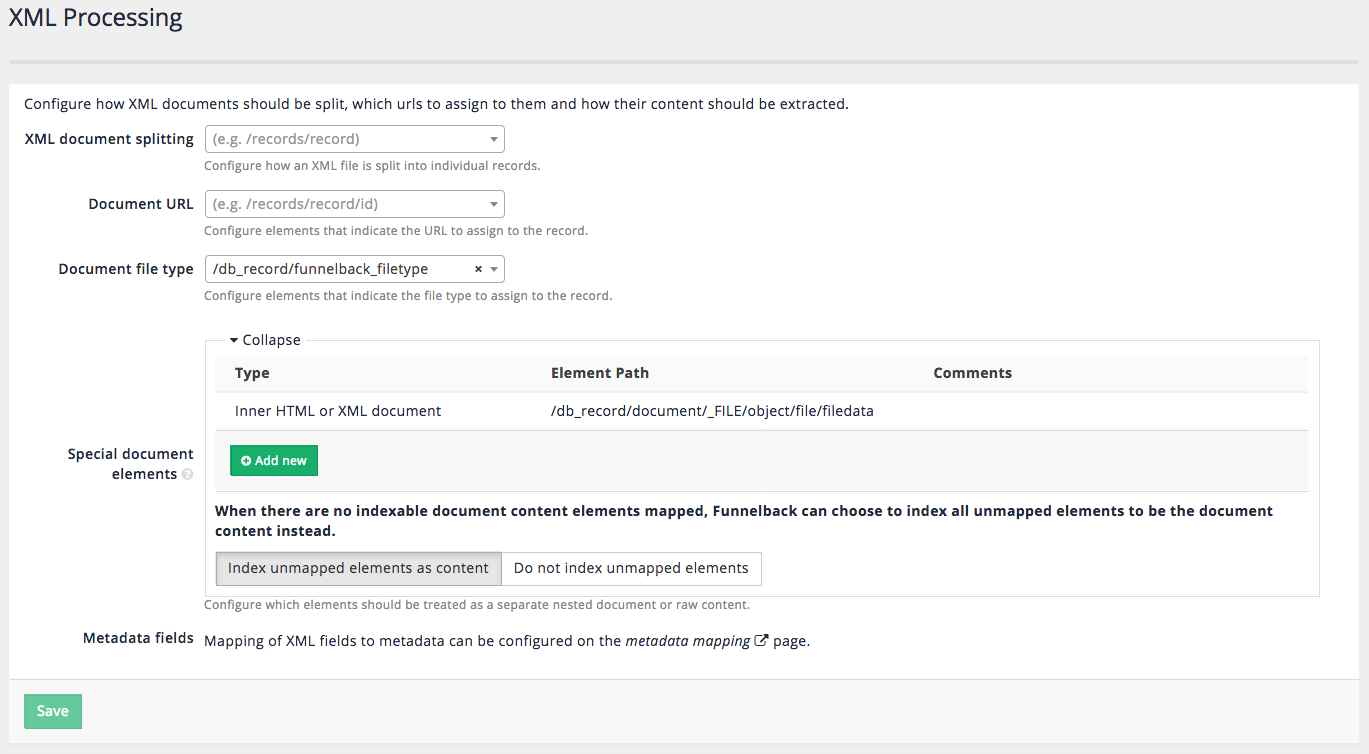

XML special configuration

There are a number of special properties that can be configured when working with XML files. These options are defined from the XML configuration screen, by selecting XML processing from the administer tab in the administration interface.

XML document splitting

A single XML file is commonly used to describe many items. Funnelback includes built-in support for splitting an XML file into separate records.

Absolute X-Paths must be used and should reference the root element of the items that should be considered as separate records.

Note: splitting an xml document is not available on Push collections.

Document URL

The document URL field can be used to identify XML fields containing a unique identifier that will be used by Funnelback as the URL for the document. If the document URL is not set then Funnelback auto-generates a URL based on the URL of the XML document. This URL is used by Funnelback to internally identify the document, but is not a real URL.

Note:

- Setting the document URL to an XML attribute is not supported.

- Setting a document url is not available on Push collections.

Document file type

The document file type field can be used to identify an XML field containing a value that indicates the filetype that should be assigned to the record. This is used to associate a file type with the item that is indexed. XML records are commonly used to hold metadata about a record (e.g. from a records management system) and this may be all the information that is available to Funnelback when indexing a document from such as system.

Special document elements

The special document elements can be used to tell Funnelback how to handle elements containing content.

Inner HTML or XML documents

The content of the XML field will be treated as a nested document and parsed by Funnelback and must be XML encoded (i.e. with entities) or wrapped in a CDATA declaration to ensure that the main XML document is well formed.

The indexer will guess the nested document type and select the appropriate parser:

-

The nested document will be parsed as XML if (once decoded) it is well formed XML and starts with an XML declaration similar to

<?xml version="1.0" encoding="UTF-8" />. If the inner document is identified as XML it will be parsed with the XML parser and any X-Paths of the nested document can also be mapped. Note: the special XML fields configured on the advanced XML processing screen do not apply to the nested document. For example, this means you can't split a nested document. -

The nested document will be parsed as HTML if (once decoded) when it starts with a root

<html>tag. Note that if the inner document contains HTML entities but doesn't start with a root<html>tag, it will not be detected as HTML. If the inner document is identified as HTML and contains metadata then this will be parsed as if it was a HTML document, with embedded metadata and content extracted and associated with the XML records. This means that metadata fields included in the embedded HTML document can be mapped in the metadata mappings along with the XML fields. -

If a field in the XML should be parsed as HTML and treated as the title of the record/result, this field must be surrounded by a root

<html>tag and a<head>tag and a<title>tag. Without the<head>and<title>tags, the HTML parser will treat that field as if it was content in the body of an HTML document, not as a title.

The inner document in the example below will not be detected as HTML:

<root>

<name>Example</name

<inner>This is <strong>an example</strong></inner>

</root>

This one will:

<root>

<name>Example</name>

<inner><html>This is <strong>an example</strong></html></inner>

</root>

Example of an inner HTML document using a CDATA section:

<root>

<name>Example</name>

<inner><![CDATA[<html>This is <strong>an example</strong></html>]]></inner>

</root>

Example of a field parsed as HTML and assigned as the title of the record.

<root>

<name><html><head><title>Department of Family & Support Services</title></head></html></name>

<description>...</description>

<url>...</url>

</root>

Example of a field parsed as HTML using a CDATA section and assigned as the title of the record.

<root>

<name><![CDATA[<html><head><title>Department of Family & Support Services</title></head></html>]]></name>

<description>...</description>

<url>...</url>

</root>

Indexable document content

Any listed X-Paths will be indexed as unfielded document content - this means that the content of these fields will be treated as general document content but not mapped to any metadata class.

For example, if you have the following XML document:

<?xml version="1.0" encoding="UTF-8"?>

<root>

<title>Example</title>

<inner>

<![CDATA[

<html>

<head>

<meta name="author" content="John Smith">

</head>

<body>

This is an example

</body>

</html>

]]>

</inner>

</root>

With an Indexable document content path to //root/inner, the document content will have This is an example; however, the metadata author will not be mapped to "John Smith". To have the metadata mapped as well, the Inner HTML or XML document path should be used instead.

Include unmapped elements as content

If there are no indexable document content paths mapped, Funnelback can optionally choose to how to handle the unmapped fields. When this option is selected then all unmapped XML fields will be considered part of the general document content.

Example: electronic books

Let's say you had a number of XML files representing electronic-books similar to:

<book>

<info>

<title> The Adventures Of Sherlock Holmes </title>

<author> Arthur Conan Doyle </author>

</info>

<contents>

<chapter>A Scandal in Bohemia</chapter>

<chapter>The Red-headed League</chapter>

...

<chapter>The Adventure of the Copper Beeches</chapter>

</contents>

</book>

Metadata

To map this XML structure to metadata classes for the author (to bookAuthor), title (bookTitle) and chapters (bookChapter), create mappings for the following :

- map the

//authoror/book/authorX-Path to a metadata class namedbookAuthor. - map the

//titleor/book/titleX-Path to a metadata class namedbookTitle. - map the

//chapter,//contents/chapteror/book/contents/chapterX-Path to a metadata class namedbookChapter.

When this data is indexed, the text from these elements will be indexed and assigned to the specified metadata classes.

Presentation

An XSL stylesheet can be defined to present the XML record as a HTML webpage, so that a page is rendered when clicking on the search result link.

- Create the

template.xslstylesheet to convert the XML into HTML. - Change the collection's search forms to use the

cache_urlinstead of thelive_url.

For more details and caveats, see the XML and XSL section of the cache controller documentation.

Crawling XML files

To crawl XML files you will need to ensure that the crawler.parser.mimeTypes parameter includes text/xml as one of the MIME types the web crawler will accept.

XML files and local collections

When indexing XML files in a local collection it may be necessary to set the -forcexml indexer option as any XML document that can be interpreted as HTML will be parsed using the HTML parser. This is because the Content-Type HTTP header is used to decide which parser to use for these files, and if it is not present then any XML file containing a <html> tag will be parsed using the HTML parser.