Database collections

Introduction

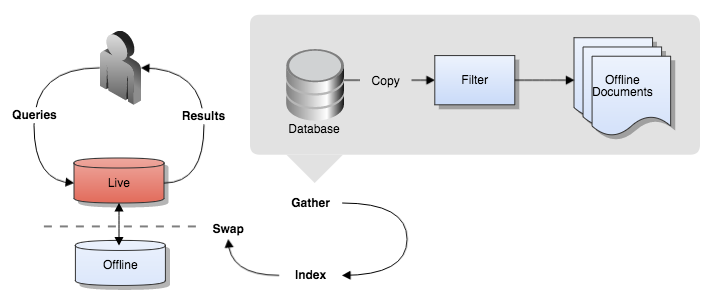

A database collection indexes the data from a relational database. Funnelback uses an SQL query to obtain data from the database. Each row returned by the query is saved as a separate XML file.

Before you start

Funnelback's database connector indexes the single table of results returned by an SQL query as separate XML records.

Because of this, the table that is returned by the query must be fully denormalised (Funnelback can't resolve any ID references to other database records and has no concept of the relational database).

The best way to achieve this is to set up a database view containing all the (denormalised) fields that are relevant to the search that is being set up.

For databases that have multiple types of content it is often necessary to set up several Funnelback database collections that index each of these types separately.

Supported databases

The database connector utilises a JDBC database driver to connect to the database system. Funnelback ships with a driver for PostgreSQL. Indexing of other databases require installation of an appropriate JDBC driver.

See: Installing JDBC drivers for further information on supported databases and driver installation.

Configuring a database collection

Create the collection

Directory collections are created by selecting create collection and selecting database from the administration interface.

The database collection is defined by configuring the following properties:

- database driver: a valid JDBC database driver, installed on the Funnelback server (see above)

- URI: JDBC URI of the database

- SQL query to select the rows

- primary key column in the SQL query

- user name and password for the database

Test the connection settings by clicking the check database connectivity button.

This button allows a quick check of the database configuration to be performed to ensure Funnelback is able to connect.

Clicking the button first causes a connection to be established based on the database connection configuration options. If the connection fails, an error indicating the type of failure and suggested next steps will be provided. If the connection succeeds, the specified SQL query will then be executed to ensure it is valid and that it contains the specified primary key column.

After creating the collection, perform an initial update of the collection.

Define database field mappings

After a successul update has run, configure metadata mappings for the database fields. The update of the database collection downloads each row from the SQL query and converts it to an XML record.

The fields that were detected in the SQL results should be listed amongst the XML sources. Map the relevant fields to metadata classes. Relevant fields include:

- fields that you wish to display in the search results summaries, or use for faceted navigation.

- fields that contribute something useful to the record's searchability. e.g. keywords, types.

- If there is a field containing a URL that should be used as the target when the result is clicked on then this should be mapped as the document's URL using the advanced XML configuration options available from the administer tab in the administration interface.

Rebuild the index by selecting reindex the live view from the advanced update option on the update tab in the administration interface.

After the reindex is complete metadata should be available for display in the search results.

Configure search results

Search results can then be configured. This involves:

- Defining the metadata classes to return in the search results by setting the summary fields (

-SF) query processor options. - If using a Freemarker search template, customising the template to display the metadata fields in the search results template within the

<@s.Results>code block.

Live links

By default the URL used in database collections is a system-assigned URL. If the default system-assigned URL is not modified (for example by filters) the modern UI will use the cache link as the live URL. This will result in the XML of the record being shown to the user when a result is clicked. You can use XSLT on the cache controller to style the response.

Modifying database records

Database records can be modified (locally) prior to indexing using the filter framework.

Configuration options

The following database options are available for database collections. These options can be set in the collection.cfg.

| Option | Description |

|---|---|

| db.bundle_storage_enabled | Allows storage of data extracted from a database in a compressed form. |

| db.custom_action_java_class | ⚠ Deprecated. Use the filter framework instead. Allows a custom java class to modify data extracted from a database before indexing. |

| db.full_sql_query | The SQL query to perform on a database to fetch all records for searching. |

| db.incremental_sql_query | The SQL query to perform to fetch new or changed records from a database. |

| db.incremental_update_type | Allows the selection of different modes for keeping database collections up to date. |

| db.jdbc_class | The name of the Java JDBC driver to connect to a database. |

| db.jdbc_url | The URL specifying database connection parameters such as the server and database name. |

| db.password | The password for connecting to the database. |

| db.primary_id_column | The primary id (unique identifier) column for each database record. |

| db.single_item_sql | An SQL command for extracting an individual record from the database |

| db.update_table_name | The name of a table in the database which provides a record of all additions, updates and deletes. |

| db.username | The username for connecting to the database. |

| db.use_column_labels | Flag to control whether column labels are used in JDBC calls in the database gatherer |

| db.xml_root_element | The top level element for records extracted from the database. |

| filter.classes | Specifies which java classes should be used for filtering documents. |